Optimizing one of the most high-stakes digital shopping experiences

MY ROLE

Lead Researcher embedded on a design team

METHODS

Usability testing, surveys

IMPACT

Increased successful credit card application rate and new accounts opened, reduced credit card declines

The spark that started the project

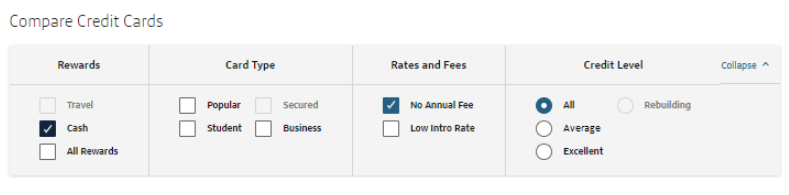

A standard, regularly performed usability test on the bank website's credit card shopping pages revealed an odd pattern. I asked participants to browse for a product that fit their needs, and they often used the filtering tool to show only the credit cards that fit their credit level. This was normal.

However...

Several participants chose a credit level category FAR from their self-disclosed credit score range, resulting in them seeing products that were either "out of their league" or inferior to the ones they were eligible for.

Implications:

If customers don't understand the labels we use to describe their credit levels, they may accidentally choose the wrong product. In the worst case, that leads to a declined application, meaning:

Losing a potential customer: The person doesn't open an account with us, so they lose out on money they might need and we lose potential revenue.

Inflicting customer pain: The person's credit score is reduced because they tried to open a line of credit. That really sucks, and they probably don't feel great about the company that denied them.

Useful background information

Trying to find a new credit card to apply for is one of the most complex individual shopping experiences: it combines the fluid UX of ecommerce with the pressures of a long-term financial contract, and the market is not known for transparency.

Three things make it even harder on customers:

Product selection is hard. There are a lot of complicated credit cards that look similar, even within one bank. Fully understanding the details of one card requires reading a legal document.

"Buying" isn't fully your decision. The bank needs to decide if you are worth giving a credit card to based on your credit history. The criteria for each card and each bank are trade secrets.

Stakes are high. Applying for a credit card is a high-stress moment: failure means you get punished with a lower credit score and nothing to show for it.

Most customers want to get the best product they are eligible for that meets their needs, though "best" means something different to each person.

The banking business is based on risk management: loaning money to people who have a history of not paying back loans will probably mean both the customer and the business will have bad outcomes. Banks need to carefully calibrate and protect how they make decisions about giving out credit to customers.

All banks have similar needs around "selling" credit cards:

Getting more customers. Obviously! There is a tiny caveat around not loaning too much money compared to the cash you have available, but that's not relevant here.

Customer-product matching. You want the people with really good credit and a lot of spending power to get the "best" cards, and you want people with weaker credit history to find the appropriate product for them to build up their credit.

Effectively managing a suite of products. Most places that offer credit cards have more than one product and plan on adding more. Each addition risks making product selection harder for the customer, and also eating into the profits of similar products.

The overarching business need is to find ways to enable customers to find the best credit card for their unique situation. Easier said than done.

Customer context

Business context

Research approach: answering the key questions

I try to break down every project into the key questions that need to be answered. Then, I go about answering them in an order based on what I need to know to proceed, how critical the information is for the team's next steps, and how easy it is to answer. For this work, I came up with four big questions:

Why do we have our current system of labels for the different levels of credit?

Sometimes systems exist for a good reason, even if they seem counterintuitive. Within finance, it's also possible there are regulatory restrictions that guide the words we use. Knowing where the original decision came from requires no external data collection and could save a lot of hassle, so I tackled this first through stakeholder outreach. Basically: "Hey, who owns this section and these labels? Can you tell me where these came from?"

What is normal in the industry?

Each industry has its jargon. If an unusual word is used by everyone in a market, it's no longer weird because customers see it all the time. I did a quick competitive analysis to see how other institutions categorized credit into different levels to get a sense if external factors guide customer expectations.

How do customers think about their credit?

At the end of the day, the most helpful data point is the customer's mental model of their credit: how it works, how good or bad it is, and especially how they describe it. I needed to know how people across a range of credit scores thought about their credit, so I ran a survey to capture this information and test out alternate labels.

What is the impact of the current problem on the business?

As with all work, it's important to find the overlap between customer and business needs, and I needed an answer ready if someone from the business asked, "Why should we care?" What harm is coming out of the current experience, and what is the potential gain? The original usability study that sparked this effort was small and qualitative, so we needed to understand the scale of the problem for both customers and the business. Because I did not have direct access to product metrics, I collaborated with my product and analytics partners to answer this question.

Question #1: Why is it like this?

Step 1 was less about customer research and more about understanding the system and the stakeholders. I reached out to design and product partners and asked them what they knew about the credit labels. If they didn't know, I asked who should know and followed up until I reached the end of the line.

I quickly got an answer in the form of an overwhelming consensus:

“I have no idea why we use these labels. This was the system we have always had.”

There was a second finding, which, paired with the first data point, was mildly alarming: This simple set of credit level categories was not a simple case of confusing copy on a website. Those labels were:

Everywhere: Every time a credit card showed up it was paired with a credit label

Across channels: The labels appeared on web, native, and in physical locations

Business critical: These categories were not just for customers; they represented the framework that the business used to align its risk models for making lending decisions

Implication: The scope of the problem was big. Really big. Making any change would involve a lot of different people and would need a really concrete business and customer problem to justify it.

Question #2: What's everyone else doing?

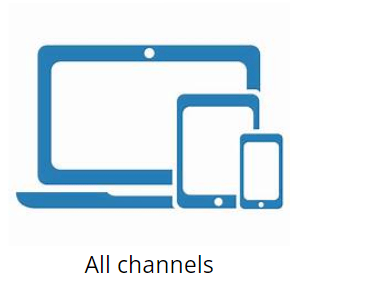

I addressed the second question with a straightforward bit of desk research (competitive analysis). I had a very simple question: "What labels do other institutions use to describe credit levels, and what do they mean?"

This wasn't just about banks. In fact, I didn't include banks in this analysis at all for the simple reason that they could only answer half of the question. After all, no bank reveals what their credit labels actually mean.

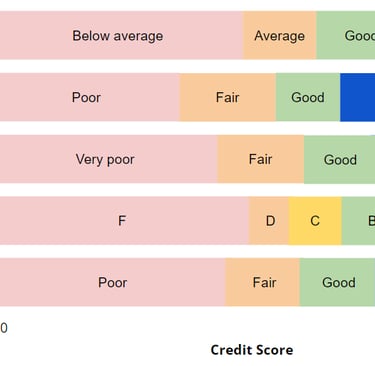

Instead, I looked to the organizations that provide a scale for credit scores and give specific numeric ranges for what each category means. Think about credit bureaus, affiliate credit score checkers, and other organizations that provide guidance around credit scores. This let me create a very simple diagram with a very clear takeaway: There's no standard, but we're breaking most of the norms that exist.

Key Findings

Most players have 4 or 5 tiers of credit levels, instead of 3 (We probably need more)

Almost every system uses the word “Excellent” (Yay, we're matching expectations here!)

No one uses the words “Average” or “Rebuilding” (We're different in probably a bad way)

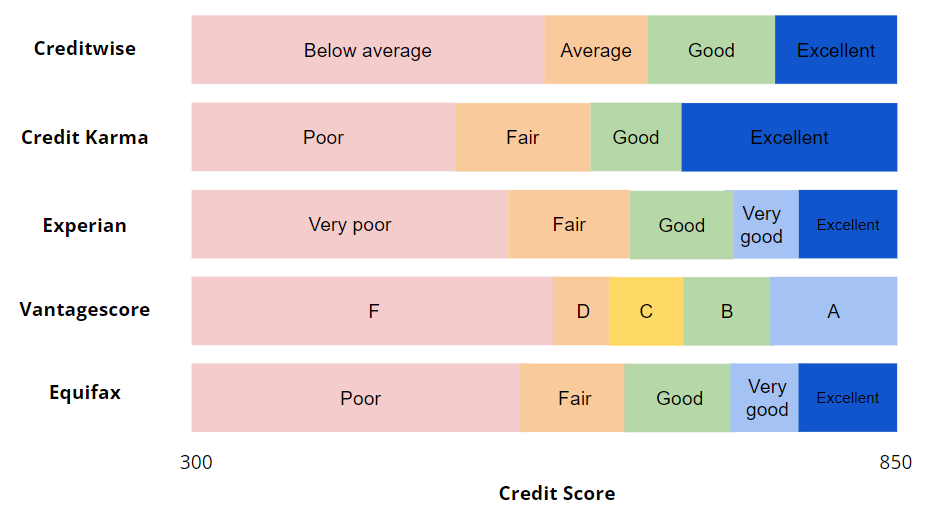

This was the first "real research" activity in terms of collecting customer data. I wanted to know how people thought about their credit level, and what words people naturally used to describe it. Importantly, this would have to include people across the credit spectrum. Because I needed customers to generate their own words, this was a mixed-methods survey: most of the questions were open-ended, and I needed to do some data cleaning before doing any analysis or visualization.

Here are some of the critical questions included in the survey:

1. What word would you use to describe your credit level to a friend?

2. Imagine you are in a bank looking to learn more about their credit cards. How would you describe your credit level to the banker in one word?

3. What word describes someone's credit score that is ...[compared to yours]

-Much better?

-Moderately better?

-Moderately worse?

-Much worse?

4. What word describes the credit scores in the following ranges? Feel free to use the same word for more than one range. (credit ranges redacted)

-300-xxx

-xxx-xxx

-xxx-xxx

-xxx-xxx

-xxx-850

You can tell I'm trying to get at basically the same question from a couple of angles, and trying to find out if there are any psychological factors that influence how people think about credit. (For the psychology folks, Q1 & Q2 are poking at social situations and motivational factors, Q3 is looking at relative comparisons).

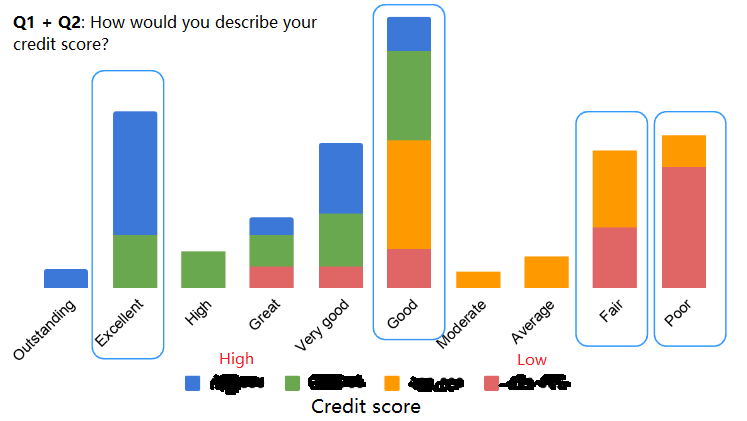

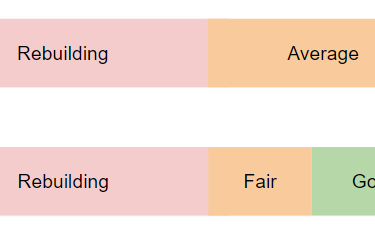

I won't bore you with all the charts, but if you had to see one it should be this one:

Key findings and implications:

1. “Excellent” resonates with those at the highest credit levels (this label works well)

2. Very few people use “Average” and no one uses “Rebuilding” (2 out of our 3 labels are non-intuitive for customers)

3. “Good,” “Fair,” and “Poor” are potentially good labels, assuming that the people who self-selected into those categories have the credit scores that match our risk models (Recommendation: consider these as the new labels beneath "Excellent")

Question #3: How do customers think?

Note: I redacted the credit scores for confidentiality; all you need to know is that blue means highest and red means lowest. There wasn't a big difference in how people answered Q1 and Q2, so I combined the answers into a single chart, separating the responses by the participants' self-reported credit range.
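For readers curious what the cleaning and tallying step might look like, here is a minimal Python sketch. It is not the analysis I actually ran: the `SPELLING_FIXES` map, the `clean_label` and `tally_by_range` helpers, the range names, and the sample responses are all made up for illustration. It normalizes free-text answers, pools Q1 and Q2, and counts labels per self-reported credit range.

```python
from collections import Counter, defaultdict

# Hypothetical spelling fixes; a real project would build this map from the data.
SPELLING_FIXES = {"excelent": "excellent", "avg": "average"}


def clean_label(raw: str) -> str:
    """Lowercase, trim, strip edge punctuation, and apply known spelling fixes."""
    word = raw.strip().lower().strip(".,!?\"'")
    return SPELLING_FIXES.get(word, word)


def tally_by_range(responses):
    """Count cleaned labels per self-reported credit range.

    `responses` is an iterable of (credit_range, q1_answer, q2_answer) tuples.
    Q1 and Q2 answers are pooled, mirroring the combined chart.
    """
    counts = defaultdict(Counter)
    for credit_range, q1, q2 in responses:
        for answer in (q1, q2):
            if answer and answer.strip():  # skip blank answers
                counts[credit_range][clean_label(answer)] += 1
    return counts


# Made-up responses for illustration only; not the study's data.
sample = [
    ("highest", "Excellent", "excelent!"),
    ("highest", "Great", "Excellent"),
    ("middle", "Good", "good."),
    ("lowest", "Poor", ""),
]

counts = tally_by_range(sample)
for credit_range, labels in counts.items():
    print(credit_range, labels.most_common(3))
```

Keeping the counts grouped by credit range is what makes it possible to see which words each group reaches for, rather than only which words are popular overall.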

Intermission: Summing up what we know

Do case studies have intermissions?... Well, this one does. I want to take a pause here to recap what we've learned at this moment. This maps onto an actual milestone during the work itself: the moment where the design team and I shared our findings with our product and analytics partners to get their feedback, and also their support with the final question. This is the narrative I crafted from answering the first 3 questions, in a nutshell.

We found a problem: We discovered that some customers are misinterpreting our credit labels when they shop for credit cards, which could cause them to apply for products outside of their range.

The problem is likely widespread: Customers across the credit spectrum all seem to think about their credit in a way that doesn't match the language we use today.

Consumers have no idea what the average credit score is. "Average" relies on customers' perception of how their credit compares to everyone else; this is often inaccurate. We're likely to have people both above and below the desired credit range self-selecting into this category.

"Rebuilding" is a word signaling intent, not level. Someone with great credit who took a small hit to their score might consider themselves "rebuilding"

There's no reason for us to keep the current state: We encountered no rationale, competitive strategy, or regulatory guidance that says we need to keep these specific labels. We stand out in a bad way in the credit industry.

We believe the problem has a huge business impact, but we'd love to make sure: Here is where we asked for assistance from our analytics partners to help us dig into whether customers are actually behaving in a way that indicates they misunderstood the credit labeling system. Luckily, our analyst partners had just the data to help us with that and were keen to figure out how big the opportunity was.

Quick note, the story will get more vague and condensed here. Two reasons for this: First, it's an analysis done by someone else, so it's not really MY contribution. More importantly, the details of this are super confidential so I'm going to just tell you the juicy parts. Read on!

The analysis of actual card shopping and application behavior began, and the result confirmed our suspicions: People are searching for and applying to the wrong credit cards!

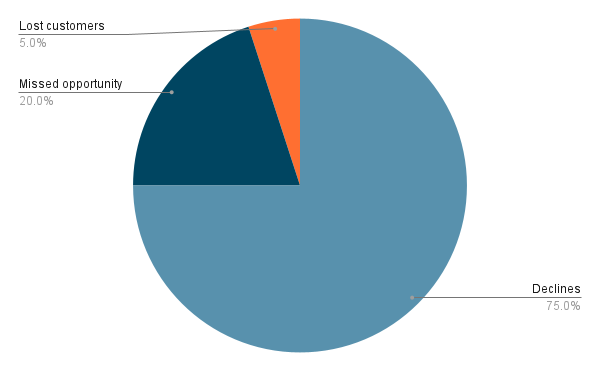

I'll break the problem down into 3 parts, 2 of which we could confirm (and size), one which we could assume was happening:

Question #4: Should the business care?

1. Unnecessary card declines: XX% of credit card applicants are declined for the card they apply for, and Y% of those declined applicants would have qualified for another (lower tier) credit card that we offer.

Implication: We're losing out on real potential customers and giving them a really bad day, which could harm the brand in addition to the business.

2. Missed opportunity for a better product: XX% of applicants were approved for the credit card they applied for, but Y% of them could have gotten a better credit card if only they had applied for it.

Implication: Customers lose out on better products that they could have been eligible for, and we lose the potential revenue from people who have the spending power.

3. [Suspected, but not confirmed] Lost customer interest: XX% of card shoppers came to explore our credit cards, but upon seeing the products they assumed were eligible for them based on the credit label, decided they no longer wanted to apply.

Implication: If true, we could be losing customers before they even apply because the product they think is the closest match for them is either not interesting, or appears to be out of their reach.

Note: As I mentioned, this was speculation on my part. I brought it up in conversation, but it did not have a noticeable impact on the discussion or the decisions that came afterwards because of the difficulty of accurately estimating how many people changed their minds because of a single word. Plus, the other two findings were enough of an impetus for action.
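The two confirmed findings boil down to simple ratios. The sketch below is a hedged illustration of that sizing logic in Python, not the analysts' actual method (which I can't share). The `Application` record, the integer tier scale, the `size_mismatch` helper, and the rule that approval means qualifying for the applied tier or better are all assumptions, and the sample data is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Application:
    applied_tier: int                  # tier of the card applied for (higher = better card)
    best_eligible_tier: Optional[int]  # best tier the applicant qualifies for; None if none


def is_approved(app: Application) -> bool:
    # Simplifying assumption: approval means qualifying for the applied tier or better.
    return app.best_eligible_tier is not None and app.best_eligible_tier >= app.applied_tier


def share(part: int, whole: int) -> float:
    """Return part/whole, or 0.0 when the denominator is empty."""
    return part / whole if whole else 0.0


def size_mismatch(apps):
    approved = [a for a in apps if is_approved(a)]
    declined = [a for a in apps if not is_approved(a)]
    # Declined, but would have qualified for a lower-tier card.
    recoverable = [a for a in declined if a.best_eligible_tier is not None]
    # Approved, but qualified for a better card than the one applied for.
    upgradable = [a for a in approved if a.best_eligible_tier > a.applied_tier]
    return {
        "decline_rate": share(len(declined), len(apps)),
        "recoverable_decline_share": share(len(recoverable), len(declined)),
        "missed_upgrade_share": share(len(upgradable), len(approved)),
    }


# Invented applications for illustration only.
sample = [
    Application(applied_tier=3, best_eligible_tier=1),     # declined, lower tier available
    Application(applied_tier=3, best_eligible_tier=None),  # declined, no eligible card
    Application(applied_tier=2, best_eligible_tier=2),     # approved, well matched
    Application(applied_tier=1, best_eligible_tier=3),     # approved, could have upgraded
    Application(applied_tier=2, best_eligible_tier=3),     # approved, could have upgraded
]

result = size_mismatch(sample)
print(result)
```

Here `decline_rate` plays the role of XX% in the first finding, while `recoverable_decline_share` and `missed_upgrade_share` play the role of Y% in the first and second findings respectively.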

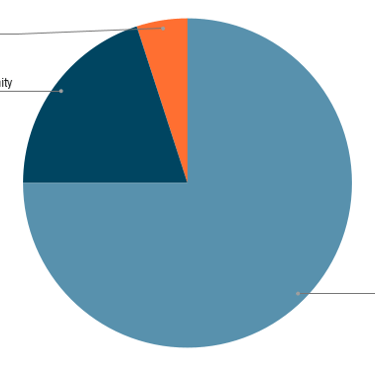

[Fake data] showing the relative sizing of the opportunity cost of not fixing this problem, measured by number of customers and a subsequent estimated revenue by product type. The category of lost customers was not quantified, but I didn't want it to feel left out of the pie chart.

Outcome and impact

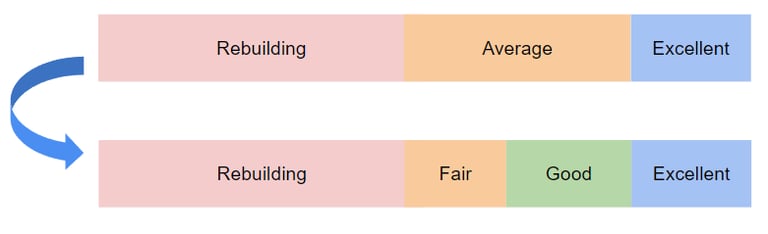

It took around 6 months to make the change, but the actual difference is really straightforward to show:

There were two changes:

"Average" changed to "Fair"

A new credit category "Good" was introduced, sitting on the border of "Fair" and "Excellent"

If this seems like a tiny shift to you, remember this wasn't about the copy on a single web page. This changed how products were defined across a huge part of the site map and impacted the way the bank assessed credit risk.

Focusing on the single wording change from "Average" to "Fair," this seemingly tiny change had big effects:

Better shopping accuracy: People were clicking on the credit level filters appropriate for their credit level at a greater rate

Better product selection: Shoppers were more likely to apply for cards within their range, meaning:

More customers: More accounts opened with no change in number of applications

Fewer declines: Reducing the rate of bad customer outcomes

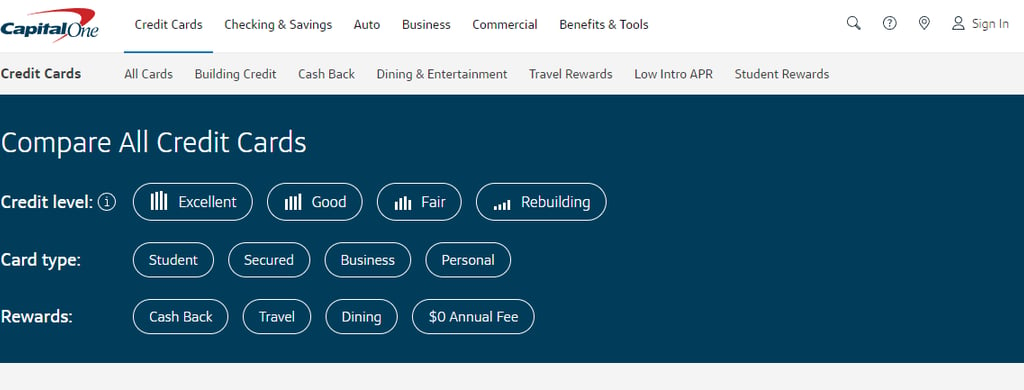

I can't share the numbers here, but suffice it to say that these changes delivered on the promise that convinced the whole enterprise to make a coordinated, simultaneous shift. No further changes to this system have occurred in the 5+ years since (outside of the addition of icons, which came from a smaller study I ran), even though over a dozen new products were added to the company's portfolio in that time.

The current credit filters on the bank's site as of May 2024.

Reflections

Looking back on this work, a few thoughts come to mind; some lessons learned.

1. There is often a perceived distinction between tactical and strategic work, mapped to the scope of what you are working on. I think there is certainly a relationship between your strategic impact and how much of the experience is in your purview: most practitioners would assume changing the color of a button on a page is less strategic than changing the value proposition, the messaging tone, or the target customer. However, at the end of the day, truly strategic moves can look tiny but have a massive impact, and draw from a well of deep customer understanding. Just take this work as an example. I nearly titled the project "A single word that was costing millions." I'm still considering it.

2. As much as people talk about being "data-driven," people are still people and the emotional part of decision-making plays a heavy part in how we think. If you made it this far, you might have noticed something in the outcome that didn't quite match the research findings: Why is "Rebuilding" still there? Arguably, the data showing that "Rebuilding" was not intuitive and was creating customer and business problems was just as strong as the data showing the same thing about "Average." The reason that "Rebuilding" was not changed to "Poor" was not due to its ineffectiveness in testing; it was a non-starter because people were concerned about the optics and consequences of labeling customers as "Poor," even though participants organically generated that label to describe their own credit. With the benefit of hindsight, and if I had had more time on that team (I moved to a different team after this saga), I would have followed up with the decision makers on this effort, and potentially performed extra qualitative research to test whether customers with lower credit scores had any negative perceptions of brands that offered such a label, or assigned one to them.